Rendered #1

Unreal Engine 5 Announced, Virtual Production + The Mandalorian, Face Replacement in The Irishman, Magic Leap Layoffs, and more

Welcome to the very first edition of Rendered!

Thanks to everyone who shared the initial announcement and got the word out! Since then, it seems like every day something exciting is happening, which makes it hard to publish because something even more exciting always seems to be right around the corner. But! We’ve got to start somewhere, and to that end I’m happy to bring you the first newsletter! As a reminder, Rendered won’t just collect news from between issues; it’s meant to capture both recent happenings and anything from the past few months or year that is relevant to what’s going on now.

Additionally, I’m happy to announce that the indefatigable Or Fleisher (of Volume.gl and so many other projects) is joining me on this newsletter to bring some insight into a selection of exciting research papers that hint at where our collective industries are heading. So let’s get into it!

— Kyle

To get in contact about sponsoring a future issue, please reach out here.

News

Unreal Engine 5 Announced

“Hundreds of Billions of Triangles”

Speaking of perpetually-occurring exciting news, this huge announcement hit right as I was about to publish this newsletter! As such, it’s fresh off the press, and we don’t have a ton of details, but there are two major announced features:

Lumen is a fully dynamic global illumination solution that immediately reacts to scene and light changes.

To quote an Epic employee from the announcement stream, “[light] baking is a thing of the past”. This is definitely exciting, but what has more of my interest, especially for its potential implications for volumetric rendering, is the engine’s second flagship feature, Nanite.

Nanite virtualized micropolygon geometry frees artists to create as much geometric detail as the eye can see. Nanite virtualized geometry means that film-quality source art comprising hundreds of millions or billions of polygons can be imported directly into Unreal Engine—anything from ZBrush sculpts to photogrammetry scans to CAD data—and it just works. Nanite geometry is streamed and scaled in real time so there are no more polygon count budgets, polygon memory budgets, or draw count budgets; there is no need to bake details to normal maps or manually author LODs; and there is no loss in quality.
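Epic hasn’t detailed how Nanite decides what to draw, so the following is only a rough illustration of what “streamed and scaled in real time” can mean in general: the classic screen-space-error approach to level of detail, which projects each level’s geometric error onto the screen and draws the coarsest level whose error stays under about a pixel. This is a textbook technique, not Nanite’s algorithm; the LOD table, 90-degree field of view, 1080-pixel screen height, and one-pixel threshold are all assumptions.

```python
import math


def projected_error_px(geometric_error_m, distance_m, screen_height_px, vfov_deg):
    """Size on screen, in pixels, of a world-space error (meters) at a given distance."""
    # Height of the view frustum at this distance, in meters.
    frustum_height_m = 2.0 * distance_m * math.tan(math.radians(vfov_deg) / 2.0)
    return geometric_error_m * screen_height_px / frustum_height_m


def pick_lod(lods, distance_m, screen_height_px=1080, vfov_deg=90.0, max_error_px=1.0):
    """Return the coarsest LOD whose projected error is at most max_error_px.

    `lods` is a list of (triangle_count, geometric_error_m) tuples ordered
    from finest (smallest error) to coarsest (largest error).
    """
    chosen = lods[0]  # fall back to the finest level if nothing qualifies
    for lod in lods:
        _, error_m = lod
        if projected_error_px(error_m, distance_m, screen_height_px, vfov_deg) <= max_error_px:
            chosen = lod  # later (coarser) qualifying levels overwrite earlier ones
    return chosen


# Hypothetical LOD chain for a ~250K-triangle scan (the error values are made up).
LODS = [(250_000, 0.0005), (60_000, 0.002), (15_000, 0.008), (4_000, 0.03)]

for d in (1.0, 10.0, 50.0):
    print(d, pick_lod(LODS, d))
# 1.0 (250000, 0.0005)
# 10.0 (15000, 0.008)
# 50.0 (4000, 0.03)
```

The triangle count drops as the asset moves away, without anyone hand-authoring a polygon budget for each distance.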

I expect we’ll hear more details at SIGGRAPH this year, but I have one major thought. Volumetric capture rendering (different from volumetric rendering), in its most naïve implementations, is expensive. I mean this in every sense of the word, but I want to narrow in on its implications for the computer trying to render the capture, specifically how that rendering fits into the budget (basically, how much stuff you can have in a game before running into performance issues) typically allotted for game assets.

Oculus recommends keeping the total polygon count of any given frame of your application somewhere between 50,000 (Quest/Go) and 2,000,000 (Rift) for VR development, depending on the headset. A naïve, static 3D scan of a human can easily be 250K polygons on its own. This means that if you want to use this asset in a game engine, that character can easily take up a significant portion, if not all, of your frame budget.

But imagine you want more than a static capture: a proper sequence of scans so your character can move/talk/etc. (what we think of when we say “volumetric capture” or “volumetric playback”). You’re playing back maybe only a single mesh per frame, so your per-frame polygon cost is unchanged (though still too high), but, assuming captures like the one above, just five seconds of capture inside your project can bloat the size of your executable substantially. You aren’t just storing a single 250K-poly capture; you’re storing 150 individual captures (30 (fps) * 5 (seconds)) meant to be played back in sequence. At a lowball estimate of 200MB per capture frame, you’re looking at an executable size of ~30GB (150 * 200MB) for five seconds of an empty scene with only a single capture in it. On Oculus Quest, the file size for your whole application is limited to less than 1GB. And obviously you want the character to move around/speak/etc., which will take far more than five seconds. And if they’re talking to someone else? Double it: 60GB just to have two people talk to each other for five seconds. There’s clearly a problem.
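The back-of-the-envelope math above, written out so the numbers are easy to tweak. Every figure here (250K polygons, 200MB per frame, 30fps, the 50K Quest/Go polygon figure, the roughly 1GB Quest limit) is one of the estimates quoted in this section, not a measurement, and 1GB is treated as 1,000MB for simplicity.

```python
# Figures are the estimates quoted in the text, not measurements.
FPS = 30
MB_PER_FRAME = 200            # lowball size of one 250K-polygon capture frame
POLYS_PER_FRAME = 250_000     # naive static scan of a human
QUEST_POLY_BUDGET = 50_000    # low (Quest/Go) end of the per-frame range quoted above
QUEST_APP_LIMIT_MB = 1_000    # roughly the 1GB Quest application limit


def sequence_size_gb(seconds, performers=1, fps=FPS, mb_per_frame=MB_PER_FRAME):
    """Disk size of raw mesh sequences, in GB (using 1GB = 1,000MB for simplicity)."""
    frames = seconds * fps
    return frames * mb_per_frame * performers / 1_000


one = sequence_size_gb(5)                  # 150 frames -> 30.0 GB
two = sequence_size_gb(5, performers=2)    # 60.0 GB
print(f"one performer, 5s: {one} GB ({one * 1_000 / QUEST_APP_LIMIT_MB:.0f}x the Quest limit)")
print(f"two performers, 5s: {two} GB")
print(f"polygons per frame vs. Quest budget: {POLYS_PER_FRAME / QUEST_POLY_BUDGET}x")
```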

However, we still see volumetric content in VR. What’s going on? A few things.

The problem above can be broken down into two major parts: asset size and asset playback cost.

Most volumetric companies are focused on the first part of this problem, which is trying to bring down the asset size. As much as people want to tout how their stage is able to output 600GB/s, everybody knows nobody can use that much data in a production context, so the general idea is to capture the most data and then compress that into the smallest size (“compression ratio”).

Some companies generate smaller meshes, some generate binary files, and some (this seems to be the emerging approach) forgo meshes as the “transfer format” entirely and opt for something much more transferable: video.

The key insight here is that what really matters isn’t how the asset is transferred, but how it is displayed. Volumetric captures need a format that is built around time. Mesh sequences obviously work for this, but are giant. Video, however, is highly transferable and very small: a frame of video is a few MB tops. Compared to our lowball mesh size, that makes video roughly 200x more efficient at encoding.

As an example, Metastage, a Microsoft Mixed Reality Capture Studio partner, recently uploaded a lot of their captured assets to the Unity Asset Store, and thankfully included some public tech specs. Their asset “Jim - Serious Talking” is only 90MB for ~20 seconds of capture.
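Taking Metastage’s quoted spec at face value, here is what 90MB for about 20 seconds works out to per frame. The 30fps playback rate is an assumption on our part, since the frame rate isn’t part of the figures quoted here.

```python
# Published figures for Metastage's "Jim - Serious Talking" asset, as quoted above.
JIM_SIZE_MB = 90
JIM_SECONDS = 20          # "~20 seconds"
ASSUMED_FPS = 30          # assumption: playback rate is not part of the quoted spec
MESH_MB_PER_FRAME = 200   # the lowball raw-mesh estimate from earlier

mb_per_second = JIM_SIZE_MB / JIM_SECONDS          # 4.5 MB/s
mb_per_frame = mb_per_second / ASSUMED_FPS         # ~0.15 MB per frame
ratio_vs_mesh = MESH_MB_PER_FRAME / mb_per_frame   # how many times smaller than raw meshes

print(f"{mb_per_second} MB/s, {mb_per_frame:.2f} MB/frame, "
      f"~{ratio_vs_mesh:.0f}x smaller than 200MB meshes")
```

Under these assumptions the gap between a video-based format and raw per-frame meshes is even wider than the rough 200x figure above.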

This method has its downsides though, as it places the emphasis on the encoding/rendering of the asset to portray an accurate version of what was captured. This is complicated. Meshes are “easier” in that you know exactly what something is supposed to look like because the format is literally meant to represent the model of something.

Notably, neither of these methods really changes the issue of frame budget. Whether you use video or a mesh, you still need to display the information stored in it, which still means rendering a potentially very dense mesh.

For example, the Jim asset linked above is still about 20K polygons per frame. Its file size is significantly smaller than it would be with a mesh as the transfer format, but its polycount, while lower, is still substantial.

Why use meshes at all, then? If video is smaller, more transferable, etc., and has the same render cost, why do people still use meshes? Because of one very important fact:

Meshes are very easy to generate.

Encoding a volumetric capture into video is an advanced skillset. But anyone with a camera and a copy of Metashape/RealityCapture can produce a 3D scan of an object. Add a few more cameras, and now they can make highly detailed (if suboptimal) volumetric captures! People are doing this now, but inevitably get stuck when they realize they can’t import a 500GB capture into Unreal/Unity.

Until now…maybe. If what Epic says about Nanite is true, it could be a major boon not just for all the DIY capture stages that can’t invest in refinement/compression/etc. technology, but also for large stages, which would have an easier path to publishing because they could use their large meshes directly instead of doing a lot of work to bring them down to a more reasonable size (and risking quality in the process). Said differently, playback (at least in Unreal + PS5) may now be a bit of a non-issue.

This also means quality capture is now within everyone’s grasp. So many large volumetric companies’ whole business model is built around the pursuit of quality at the expense of file size, and if file size doesn’t matter anymore, quality becomes a lot easier for everyone to achieve. It’s no secret that Metashape/RealityCapture can produce high-quality videogrammetry assets, and if you no longer have to intelligently decimate those assets to make them game-ready, you’ve just cut out a lot of the space that typically stood between garage volumetric stages and the biggest ones in the world.

Additionally, because we can now use giant meshes, I think games in general will be much more interested in volumetric capture. Before, mesh sequences were too big to fit in any game (games that do use scans use a single scan and re-rig it for animation), and video as a sequential format doesn’t play that nicely with the realtime context of a game engine (which turned people away). But if game studios can “just use meshes”, and capture studios can just “give them meshes”, then we can finally get some volcap in games. (Side note: I think this also represents a big opportunity for companies investing in volumetric retargeting functionality.)

I’m dreaming big here and in full conjecture mode, but I’m excited about what this may unlock for people. We obviously still don’t know a lot about the details (does it compress imported meshes?? What about the web??), but I’m excited.

iPad Pro Gets a LiDAR Sensor

New depth frameworks in iPadOS combine depth points measured by the LiDAR Scanner, data from both cameras and motion sensors, and is enhanced by computer vision algorithms on the A12Z Bionic for a more detailed understanding of a scene.

If there was ever any doubt that “spatial computing” had arrived, let this be the ringing bell announcing that it has. I think everyone recognized that the sensor-fusion-based approaches to 3D scanning with an iPad were acceptable at best (why else make an attachable depth sensor?), but I don’t think anyone actually thought Apple would take the next step and give their flagship device a proper LiDAR sensor. But here we are.

Opinions seem mixed, but mostly from the “It’s not that great!” angle. Which, obviously. Anyone looking to this to replace a Velodyne or something comparable would be looking in the wrong direction. Instead, I think this should be seen as an indicator of what’s to come, not just taken at face value. Besides, people are getting cool stuff out of this, they just aren’t mounting them to the tops of their cars for DIY autonomous vehicles… yet.

What’s also interesting is how this reconfigures some of the reality capture market on iOS/iPadOS. Before, companies needed to do a lot of their own sensor fusion to make similar functionality work. With past releases of ARKit and now the LiDAR sensor, Apple seems to be slowly eating the bottom rung of these apps. The hope is that this raises the ceiling of what apps leveraging these features can accomplish, but given that we’re still seemingly stuck in a place where the best (read: profitable) uses of facial tracking + scanning are placing a fake couch in your room to see how it looks next to your ficus or trying out different Instagram filters, it’s easy to see a future where Apple throws up its hands and just offers these things natively.

Magic Leap Lays Off Significant % of Employees

For a while now people have been talking about the post-hype slump of VR. We’re beyond grand talk of the “empathy machine” and are in the process of crashing into a few harsh realities that come from no longer being the golden child of tech:

Headsets are expensive in the best of times

Premium experiences can require a lot of computing power, so the ask for a casual observer is to buy not only a headset but also an expensive gaming PC (“Which one? How? Do I build it? What’s a driver?”)

Nobody knows what “content” is supposed to look like.

Revenue is rising but it’s not hockey-sticking like a lot of people who invested in VR would like to see.

I think that the amount of hype generated by people with the will to endure steep headset prices, buggy software, sub-par experiences, etc., was enough to make the world turn to look at VR for a bit and be interested, but the world now seems to have mostly turned and looked in a different direction.

The revenue for all XR headsets combined since 2018 is $3.2B. Magic Leap raised, in total, around $2.6B. The idea that the Magic Leap headset was somehow going to be bigger than the market it existed in is the sort of sideways groupthink that leads people to keep investing in a moonshot in hopes that it becomes too big to fail. Add in an over-promised and under-delivered core product, a global pandemic, and an industry not trending up as fast as expected, and something like this was bound to happen sooner or later.

We all knew it wasn’t going to work out, but I think we also all wanted to believe it would. Not just from a company or financial angle: the promise (or better, the dream) of what was being offered was, and still is, compelling. It’s also why Magic Leap was able to keep bringing in great people despite the looming reality of the situation, missed deadlines, and bad signs. Magic Leap cultivated a brilliant group of people working in the XR space, and a lot of them are now without jobs. Luckily for us, they’ve organized themselves into a massive spreadsheet! There are nearly 250 people in the spreadsheet below, all experienced in XR and all looking to be hired (many willing to relocate!) after being laid off from Magic Leap. If you’re hiring, definitely take a look at the list and try to bring some of these wonderful people into your fold.

Magic Leap Employees Spreadsheet

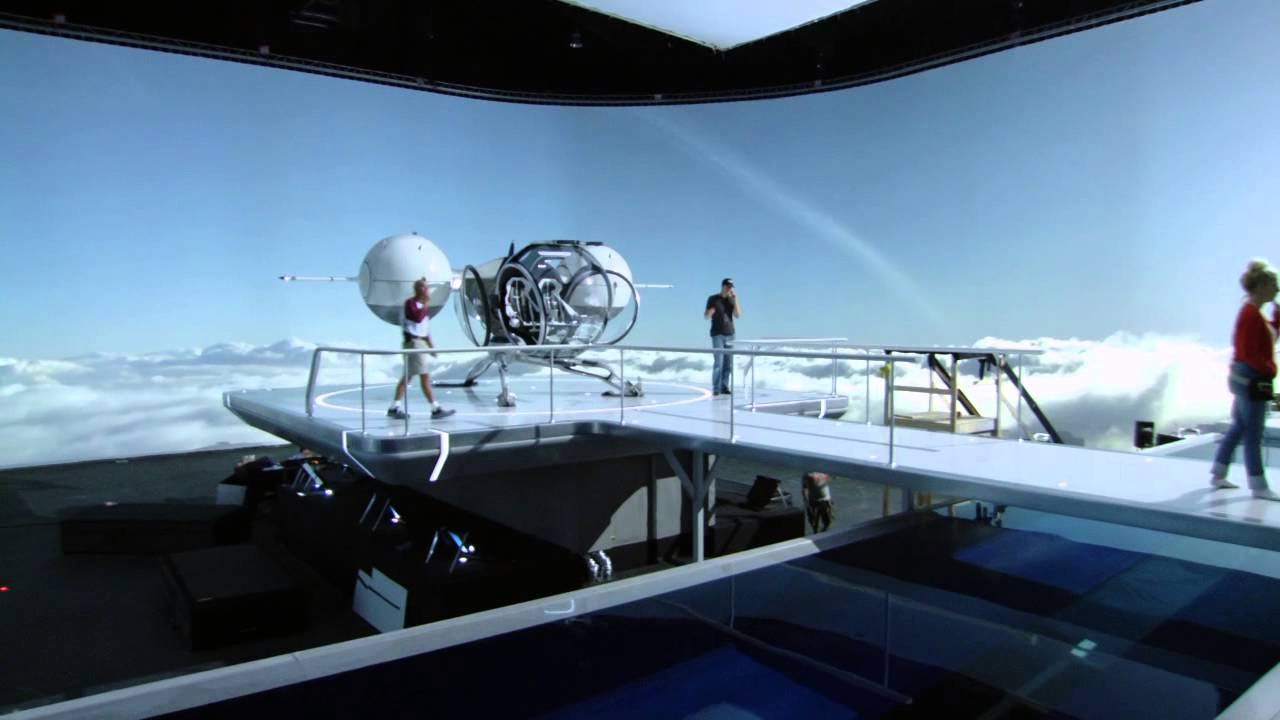

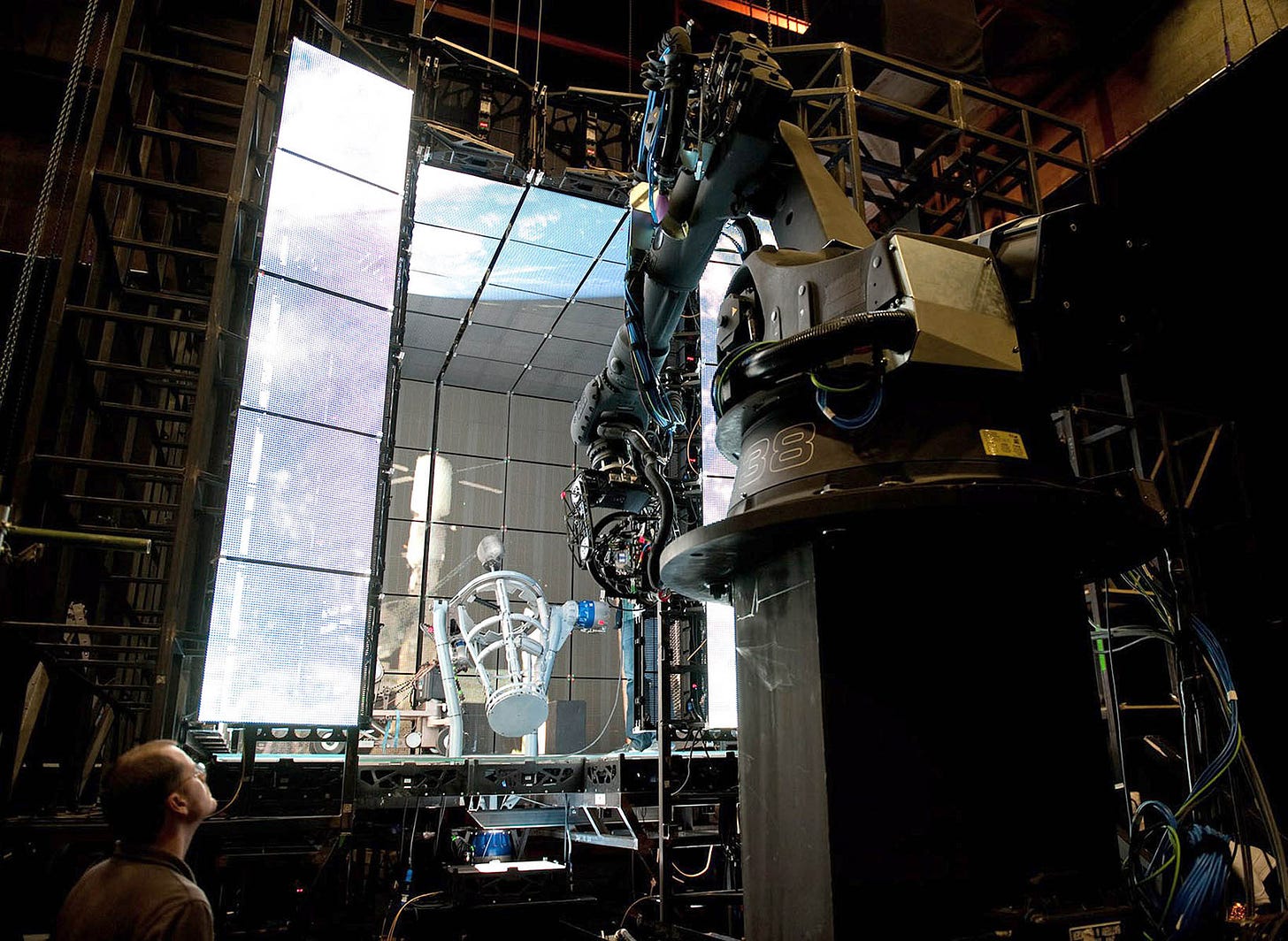

Huge Virtual Production Set Used for The Mandalorian

One of the biggest things to happen in the virtual production space in the past year was the release of Disney’s The Mandalorian and subsequent press circuit touting how it was made.

There’s more detail in the linked article above, but I think a key thing to note is that the principles behind this set have been around for a while. The primary innovation of virtual production (in my eyes) is just one thing: tracking a camera’s position in space. The effusiveness of the press circuit may make you think The Mandalorian stage offers more than that, but that’s essentially it: a tracked camera and some giant LED screens.

When you know where your camera is in space, you can do a lot of things. The main thrust of virtual production right now is to pipe that camera position into Unreal (as done on The Mandalorian) and then display what the matching virtual camera in Unreal sees somewhere, be it a director’s monitor, the physical camera’s monitor, or, in The Mandalorian’s case, a giant screen.

This used to be an incredibly hard/expensive process, in part because there wasn’t really any affordable middleware that abstracted the hard parts away from a production. You couldn’t “just use Unreal”, you would have to build your own realtime-ish asset viewer (making game engines seems to be a theme for Rendered #1) from scratch.

Avatar did this through a giant Vicon-style camera tracking array that could see where the camera was and stream proxies to a director’s monitor. They developed their own streaming asset viewer.

Oblivion used an approach very close to The Mandalorian’s, but didn’t need to rely on a tracked camera position because the “background” depicted a scene far enough away that you didn’t need to worry about parallax. So instead they rendered out a high-quality back plate and played it back behind the actors.
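Why a distant background can get away without tracking: for a pinhole camera that slides sideways by a distance B, a point at depth Z shifts across the image by f·B/Z pixels, where f is the focal length in pixels. The focal length, the half-meter move, and the depths below are illustrative assumptions, not figures from any of these productions.

```python
def parallax_px(baseline_m: float, depth_m: float, focal_px: float) -> float:
    """Image shift, in pixels, of a point depth_m away when a pinhole camera
    translates sideways by baseline_m (f * B / Z)."""
    return focal_px * baseline_m / depth_m


FOCAL_PX = 2_000   # assumption: ~90-degree horizontal field of view on a 4K-wide frame
MOVE_M = 0.5       # assumption: a half-meter sideways camera move

for depth_m in (3, 30, 3_000):   # set piece, building across the street, distant landscape
    print(f"{depth_m:>5} m away: {parallax_px(MOVE_M, depth_m, FOCAL_PX):.1f} px")
```

A set piece a few meters away moves by hundreds of pixels, which a static plate can’t fake; a landscape kilometers away moves by a fraction of a pixel, which nobody will notice.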

Gravity pre-programmed a video scene inside an LED cube to match pre-programmed camera patterns. A downside of this is that the motion on the LED walls is “baked”: you can’t really improvise anything, and all the motion has to be perfectly choreographed, otherwise the background and the lighting on a character’s face won’t line up.

The most recent Star Wars films used an approach similar to Oblivion’s, using projectors to get “real” lighting inside the Millennium Falcon.

What The Mandalorian does is bundle up all of the above and then throw a ton of money at the problem. Nobody had done this before not because the ideas weren’t there, but mainly because it takes a studio like Disney to build four massive, high-resolution video walls, and a company like Epic leveraging decades of game engine development to deliver a robust third-party solution for realtime asset viewing. A lot of people have been approximating the same experience, but Disney was the one to finally put the pieces together and just do it.

It’s not a holodeck, it’s not a revolution, it’s just the next permutation of an idea that has been steadily evolving in film over the past decade.

Robert De Niro Gets De-Aged for The Irishman

Speaking of evolving ideas: the recent-ish film The Irishman used some pretty insane techniques to portray Robert De Niro’s character aging over time. By “insane” I don’t mean “awe-inspiring”; I’d describe it more as masochistic, in a way that speaks to the divide between the emerging sphere of virtual production and traditional production, mainly in how The Irishman approaches the problem it is trying to solve.

Problem: Robert De Niro in real life is older than the character he is meant to portray. How can we de-age him to make him look younger?

Traditionally, you do it all by hand. Yes, you can guide it with some reference 3D scans, but at the end of the day someone is tweaking sliders around lip creases to get each frame to look right. I’m being a bit facetious here, but this is the general thrust of how you get an uncanny resemblance to Rachael in Blade Runner 2049, Leia in Rogue One: A Star Wars Story, and Benjamin Button in The Curious Case of Benjamin Button.

From the FXGuide post on Rogue One:

A blendshape rig is the dominant approach to facial animation used throughout the industry.
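For anyone who hasn’t met one: a blendshape rig stores a neutral face plus a set of sculpted target shapes, and each frame is the neutral mesh plus a weighted sum of offsets toward those targets. The sketch below uses a made-up three-vertex “face” with two hypothetical targets; production rigs have thousands of vertices and hundreds of shapes, and fitting the weights to match footage is the hard inverse of this function.

```python
Vec3 = tuple[float, float, float]


def blend(neutral: list[Vec3], targets: dict[str, list[Vec3]],
          weights: dict[str, float]) -> list[Vec3]:
    """Delta blendshapes: v = neutral + sum_i w_i * (target_i - neutral)."""
    result = []
    for idx, (nx, ny, nz) in enumerate(neutral):
        x, y, z = nx, ny, nz
        for name, w in weights.items():
            tx, ty, tz = targets[name][idx]
            # Move toward this target by a fraction w of its offset from neutral.
            x += w * (tx - nx)
            y += w * (ty - ny)
            z += w * (tz - nz)
        result.append((x, y, z))
    return result


# Hypothetical toy data: two mouth corners and a chin.
NEUTRAL = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, -1.0, 0.0)]
TARGETS = {
    "smile":    [(-1.2, 0.3, 0.0), (1.2, 0.3, 0.0), (0.0, -1.0, 0.0)],
    "jaw_open": [(-1.0, -0.1, 0.0), (1.0, -0.1, 0.0), (0.0, -1.8, 0.0)],
}

frame = blend(NEUTRAL, TARGETS, {"smile": 0.5, "jaw_open": 0.25})
print(frame)
```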

The perpetual issue is that film is a 2D medium, but people (and faces) are 3D. To really get the representation right, you need to somehow derive a 3D model of a face from a 2D reference. The most advanced approaches to this problem will have actors sit in for a face scan in order to generate a 3D dataset of their face, and then VFX artists will use that asset library and build up a face that most closely matches the face/light/etc. that needs to be replaced in the corresponding shot. Custom tools exist at each step of this process to make it easier as well (Flux/Medusa/etc. mentioned in the video).

For deceased people, or people who otherwise can’t sit in a capture stage, you have to go through archival footage/video and use those images in a very rough photogrammetry project to reconstruct their face from scratch.

For Leia, with Carrie Fisher’s blessing, the team sculpted a digital Leia solely from reference.

For The Irishman, the production company did… everything. They brought De Niro and other cast members in for scans at Light Stage, did the archival work, and custom-built their own IR stereo camera to get lighting information (via disparity) from each scene.

Notably, their tool Flux is described this way: “The new Flux pipeline is designed to produce results without an explicit animation stage.” It sounds like they largely automated the actual process of generating the 3D face from the 2D data.

What I think these approaches still miss though is that they still treat the act of filming as a fundamentally 2D process. You are likely not surprised to find that I think we should start treating filmmaking as a 3D process.

If the production had used an actual RGBD sensor on set (via Depthkit, or even a custom one), they would have been able to capture 3D data as a first priority instead of having to derive it later. The IR camera they built was only really used to capture 3D lighting information! They derived the 3D from the 2D image! This is a huge inefficiency.
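What “capturing 3D data as a first priority” buys you: with a calibrated depth camera, every pixel can be lifted straight to a 3D point with the pinhole model, X = (u − cx)·Z/fx and Y = (v − cy)·Z/fy, with no reconstruction step in between. The intrinsics and the tiny depth image below are placeholder values, not those of Depthkit or any particular sensor.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    fx: float  # focal length in pixels (x)
    fy: float  # focal length in pixels (y)
    cx: float  # principal point (x)
    cy: float  # principal point (y)


def backproject(depth_m: list[list[float]], k: Intrinsics) -> list[tuple[float, float, float]]:
    """Turn a depth image (meters, row-major) into camera-space 3D points.

    Pixels with depth <= 0 are treated as missing and skipped, a common sensor convention.
    """
    points = []
    for v, row in enumerate(depth_m):
        for u, z in enumerate(row):
            if z <= 0:
                continue
            points.append(((u - k.cx) * z / k.fx, (v - k.cy) * z / k.fy, z))
    return points


K = Intrinsics(fx=500.0, fy=500.0, cx=1.0, cy=1.0)   # hypothetical 3x3-pixel sensor
DEPTH = [
    [2.0, 2.0, 0.0],   # 0.0 = no return from the sensor
    [2.0, 1.5, 2.0],
    [2.0, 2.0, 2.0],
]
cloud = backproject(DEPTH, K)
print(len(cloud), cloud[3])   # the center pixel lands on the optical axis at 1.5 m
```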

The issue is that 3D capture is seen as mutually exclusive with 2D capture. If you’re on set, you’re doing normal 2D capture. If you’re off set, you’re doing 3D in a big capture dome, devoid of context. But moving forward, in my mind, these will no longer be mutually exclusive processes. That will, however, require DPs and other techs on set to understand the 3D capture process just as well as they understand 2D capture.

It’s also funny because, even considering all The Irishman did, one of the techs on the project, in the video linked above, states:

“The achievement is not wearing markers.”

All this work just to avoid wearing markers… c’mon. If the VFX industry can brute-force its way into getting a 3D model from a 2D capture, I think that’s fine, but if all we’re trying to do is spare people from wearing markers, I do wish some productions would try using depth sensors more prominently for key capture, not just for reference, compositing, and camera tracking.

I have more to say on face replacement in Hollywood (small tease), but I’ll have to save it for the next newsletter, as this one is already getting too long.

Custom Game Engines Guide

In the time before Unity/Unreal, making a game meant building an engine and then making the game with that engine. This made game creation far less accessible than it is now. I think often of Chris Wade’s post on Gamasutra about why it’s hard to make a game, particularly the “inverse pyramid of complexity” that anyone who has made a game can understand:

Making your own engine is like its own mini pyramid inside that foundation section, but one just as wide as the top of the main pyramid. You just wanted to make a simple platformer, but ended up needing to learn about cross-platform graphics APIs. It’s easy to see how this was prohibitive.

However, custom engines still persist! In part because of the ways some studios operate/like to make games, it can be easier to build a tool that is custom made to create the game you want to make, instead of needing to bend a more general tool like Unity/Unreal to be the engine you want it to be.

Raysan5, the creator of the fantastic game framework raylib, put together a giant list/compendium of known custom game engines and the games they are used on, linking where possible to information about the engines themselves.

For anyone interested in game engines/game engine development, I highly recommend checking this out!

C# Source Generators

It’s not often a programming language gets a feature added to it that is worth talking about in a newsletter, but the announcement of C# getting metaprogramming/macros/whatever you want to call it through their introduction of “Source Generators” is big.

I recognize that other programming languages have very similar, and even more robust, permutations of this same idea, but this is big in the context of C# because C# is one of the most widely used languages for game development. This is largely due to the fact that Unity is a behemoth in the space, but if you look at the list of custom game engines above, many use some version of MonoGame (a C# game framework), and other custom engines may use C# as well.

There’s a whole newsletter’s worth of reasons I think this matters, but I want to focus on a specific feature of Source Generators and shine a light on what I think its implications are.

Part of the Source Generator feature set is the ability to read in arbitrary files before your code is compiled. A generator can then parse such a file and turn its contents into C# code. This means I could pass in a JSON file and have it converted into some C# representation of that JSON.
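Real Source Generators are written in C# against the Roslyn compiler APIs (a class implementing `ISourceGenerator` that can read files passed to the compiler as `AdditionalFiles` and add new source to the compilation). To keep all examples here in one language, the Python sketch below only mimics the transformation step: it reads a JSON description and emits the text of a C# class that a generator could then add. The JSON shape, the `EnemyConfig` class name, and the type mapping are hypothetical.

```python
import json


def csharp_type(value) -> str:
    """Map a JSON scalar to a C# type name (hypothetical, scalars only)."""
    if isinstance(value, bool):      # check bool before int: bool is a subclass of int
        return "bool"
    if isinstance(value, int):
        return "int"
    if isinstance(value, float):
        return "float"
    if isinstance(value, str):
        return "string"
    raise TypeError(f"unsupported JSON value: {value!r}")


def csharp_literal(value) -> str:
    """Render a JSON scalar as a C# literal."""
    if isinstance(value, bool):
        return "true" if value else "false"
    if isinstance(value, float):
        return f"{value}f"           # C# float literals need an f suffix
    if isinstance(value, str):
        return json.dumps(value)     # JSON string escaping is close enough for a sketch
    return str(value)


def generate_class(class_name: str, json_text: str) -> str:
    """Emit C# source for a class with one public field per top-level JSON key."""
    data = json.loads(json_text)
    lines = [f"public partial class {class_name}", "{"]
    for key, value in data.items():
        lines.append(f"    public {csharp_type(value)} {key} = {csharp_literal(value)};")
    lines.append("}")
    return "\n".join(lines)


src = generate_class("EnemyConfig", '{"health": 100, "speed": 3.5, "name": "Grunt", "boss": false}')
print(src)
```

Declaring the output as a `partial` class lets hand-written code add behavior to the same class alongside the generated fields.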

One of the largest barriers to entry for people learning Unity is that, much to their chagrin and despite how often they were told “Unity is easy!”, they still need to write C# code. Imagine instead if that person could author a simple JSON file describing what they wanted in their scene, feed it to some plugin, and then have a working scene in front of them, complete with logic, lighting, etc., all without needing to touch C#. You’d be able to author games/interactive experiences/whatever without needing to code, but with all the affordances of code at the same time.

This is the promise here. It’s a half step between needing to have a robust plugin that allows for realtime authoring (where logic has always been an issue), and writing pure C# code. I think, once Unity gets access to this feature of C#, we’ll see a lot of plugins utilizing something similar to the above scenario to do a variety of different things, from populating scenes, to setting up build pipelines, to managing serialization, etc.

Papers Review

In every issue, we hope to surface state-of-the-art research in adjacent fields that is likely to affect the future of volumetric capture, rendering, and 3D storytelling. To do this, I’m happy to have Or Fleisher on board to give us an overview of some really cool research that has emerged over the past few months. Take it away Or!

Thanks Kyle! Conferences around the globe have been hit hard by the coronavirus pandemic, and many have been canceled or postponed (SXSW, GDC, E3, and more). However, with SIGGRAPH (July 19) and CVPR (June 13) going virtual, we’re seeing an increasing amount of incredible research published online, some of which actually tackles the issues the pandemic imposes on research.

NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis

B. Mildenhall, P. Srinivasan, M. Tancik, J. Barron, R. Ramamoorthi, R. Ng

What are neural representations of scenes? Good question! This research presents a method for synthesizing new views of a scene given a set of input images with known cameras (sound familiar? I’m thinking about photogrammetry too). The research models a scene as a radiance field: put simply, a function that takes in a 3D position and a viewing direction and returns the color and density at that point. Rendering a pixel then means accumulating those values along the (imaginary) ray cast from the camera through that pixel.

To do that, the method trains a neural network on a set of input images of a scene, optimizing it until it encodes that scene’s radiance field. After training for a very long time, you can then render new views by providing a camera position and viewing direction, which is nonetheless mind-blowing 🤯.
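The rendering half of NeRF is compact enough to sketch. Along each camera ray, the method samples points, gets a density σ and a color c at each one, and composites them front to back with the volume rendering sum C = Σᵢ Tᵢ(1 − exp(−σᵢδᵢ))cᵢ, where δᵢ is the spacing between samples and Tᵢ = exp(−Σⱼ<ᵢ σⱼδⱼ) is the fraction of light that survives to sample i. In the paper the field is a trained neural network that also depends on viewing direction; here a hard-coded red sphere stands in for it, so this shows only the compositing step, not training.

```python
import math

Color = tuple[float, float, float]


def toy_field(x: float, y: float, z: float) -> tuple[float, Color]:
    """Stand-in for the trained network: a dense red sphere of radius 1 at the origin.

    Returns (density sigma, RGB color). Viewing direction is ignored for simplicity.
    """
    inside = x * x + y * y + z * z <= 1.0
    return (5.0 if inside else 0.0), (1.0, 0.0, 0.0)


def render_ray(origin, direction, near=0.0, far=4.0, n_samples=128) -> Color:
    """Composite samples front to back: C = sum_i T_i * (1 - exp(-sigma_i * delta_i)) * c_i."""
    delta = (far - near) / n_samples
    transmittance = 1.0                      # T_i: fraction of light not yet absorbed
    rgb = [0.0, 0.0, 0.0]
    for i in range(n_samples):
        t = near + (i + 0.5) * delta         # midpoint of each ray segment
        p = [o + t * d for o, d in zip(origin, direction)]
        sigma, color = toy_field(*p)
        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this segment
        for ch in range(3):
            rgb[ch] += transmittance * alpha * color[ch]
        transmittance *= 1.0 - alpha
    return tuple(rgb)


hit = render_ray(origin=(0.0, 0.0, -2.0), direction=(0.0, 0.0, 1.0))   # straight through the sphere
miss = render_ray(origin=(0.0, 2.0, -2.0), direction=(0.0, 0.0, 1.0))  # passes above it
print(f"hit: {hit[0]:.5f} red, miss: {miss}")
```

Nothing in this loop knows about a mesh: the “surface” is just where density piles up along the ray.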

Why this matters

Right now, this research could help reduce the number of images needed for photogrammetric reconstruction by synthesizing new views from a small collection of input images with known cameras. Down the road, this is one of a few examples that suggest a radically different approach to rendering: instead of a traditional pipeline that draws pixels on the screen based on known geometry, pixels here are the result of an optimization algorithm, meaning there is no explicit geometry (no mesh) in the rendering process.

3D Photography Using Context-aware Layered Depth Inpainting

M. Shih, S. Su, J. Kopf, J. Huang

I’ve seen this parallax effect before and wondered, how many hours of compositing were spent creating it? Although the results of this research are presented in somewhat cliché ways, the potential uses and implications of what it’s solving are big. The research presents a way to segment and in-paint occluded areas in RGBD captures. In some ways this work relates perfectly to NeRF (above) as it also uses view synthesis to fill occluded areas.

Why this matters

Many of today’s volumetric capture systems are limited by line of sight occlusions. For example, if someone holds up their hand in front of their face, you’re unable to see their face from that capture. You could use a different capture, but the issue is often much more subtle. The camera that is being occluded will often have the best view for some number of arbitrary viewing angles, but if I’m looking at that capture from a ten degree offset, even if it’s the best angle, I’m going to see missing data where I expect a face to be. While not the original intention of this research, in theory, it could be used to in-paint these missing parts. Or if nothing else, at least you can make cool GIFs?
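A toy version of the occlusion problem: forward-warp one row of an RGBD image to a slightly shifted viewpoint (each pixel moves by f·B/Z, so near pixels move more than far ones) and look at which target pixels receive nothing. Those holes are exactly what inpainting has to fill. The row, focal length, and baseline are made-up numbers, and the nearest-surface-wins rule is a plain z-buffer, not the paper’s layered depth representation.

```python
from typing import Optional


def warp_row(colors: list[str], depths_m: list[float], focal_px: float,
             baseline_m: float) -> list[Optional[str]]:
    """Forward-warp one RGBD image row to a camera shifted sideways by baseline_m.

    Each pixel moves by round(focal_px * baseline_m / depth): near pixels move
    more than far ones. When two pixels land on the same spot, the nearer one wins
    (a one-row z-buffer). Target pixels that nothing lands on stay None; these are
    the disocclusion holes. The shift direction is an arbitrary sign convention.
    """
    width = len(colors)
    out: list[Optional[str]] = [None] * width
    zbuf = [float("inf")] * width
    for x, (color, z) in enumerate(zip(colors, depths_m)):
        nx = x + round(focal_px * baseline_m / z)
        if 0 <= nx < width and z < zbuf[nx]:
            out[nx] = color
            zbuf[nx] = z
    return out


# Hypothetical row: background "B" at 10 m with a hand "H" held up at 1 m.
ROW = ["B", "B", "H", "H", "B", "B", "B", "B"]
DEPTHS = [10.0, 10.0, 1.0, 1.0, 10.0, 10.0, 10.0, 10.0]
warped = warp_row(ROW, DEPTHS, focal_px=40.0, baseline_m=0.05)
print(warped)  # ['B', 'B', None, None, 'H', 'H', 'B', 'B']
```

The hand slides over, and the two pixels of background it used to cover come out empty because no camera ever saw them.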

AdversarialTexture

J. Huang, J. Thies, A. Dai, A. Kundu, C. Jiang, L. Guibas, M. Nießner, T. Funkhouser

Texture fusion from multiple views has been one of those longstanding research topics, and while there are a lot of approaches out there that work, I found this recent paper to be a fresh take on using machine learning to tackle the problem. By employing a discriminator network trained on pairs of “real” and “fake” (re-projected) images, this approach achieves good-looking results for texture optimization from RGB-D scans.

Why this matters

Blurry/“melting” textures have always been an issue with 3D scans. Especially if you happen to catch a grazing angle, your texture can look spread like cream cheese. For anyone who knows this problem, this paper suggests ways to finally (and consistently) get around it.
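Why grazing angles smear: a camera pixel that hits a surface at angle θ from the surface normal covers roughly 1/cos θ more surface along one direction than a head-on pixel, so its color gets spread across more texels. A common hand-tuned heuristic when fusing textures from several views is to weight each view’s sample by cos θ so oblique views contribute less. This is the kind of baseline the paper improves on, not the paper’s adversarial method; the angles and gray values below are illustrative.

```python
import math


def stretch_factor(angle_deg: float) -> float:
    """Roughly how much a pixel's footprint elongates on a surface viewed
    angle_deg away from the surface normal (1.0 = head-on)."""
    return 1.0 / math.cos(math.radians(angle_deg))


def blend_views(samples: list[tuple[float, float]]) -> float:
    """Cosine-weighted average of (gray value, view angle in degrees) samples
    for a single texel. Views at 90 degrees or more get zero or near-zero weight."""
    total = weight_sum = 0.0
    for value, angle_deg in samples:
        weight = max(0.0, math.cos(math.radians(angle_deg)))
        total += weight * value
        weight_sum += weight
    return total / weight_sum if weight_sum > 0.0 else 0.0


for angle in (0, 45, 60, 80):
    print(f"{angle:>2} deg off-normal: {stretch_factor(angle):.2f}x stretch")

# A head-on view says 0.8 and a grazing view says 0.2: the plain average is 0.5,
# while the cosine-weighted blend stays close to the head-on value.
print(blend_views([(0.8, 10.0), (0.2, 80.0)]))
```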

Resources

Kyle here again. In the spirit of trying to collectively grow the knowledge of everyone in this space, I want to share a few resources for people to check out in each newsletter. These will take various forms, but ultimately should be something you can engage with directly, right now, without needing to set up a camera, write code, etc.

Arcturus Worldwide Volumetric Studio Map

Arcturus, creators of Holosuite, have updated their map of volumetric studios around the world! The map shows where studios are located globally, broken down by region and type (4DViews, Microsoft, etc.). Check it out here.

Scatter Resource Collection for Depthkit on Are.na

Scatter maintains a public collection of a ton of interesting links that are related to volumetric filmmaking, VR, and 3D scanning on Are.na. I’m sure I’ll highlight some of the resources linked here in future newsletters, but for now just dive in here!

Open Source Full Body Volumetric Capture Software

This has been on my radar for a while, but I’ve never known anybody to use it. They recently added Azure Kinect support, which seems like as good a time as any to try it out.

Briefly Noted

These are smaller news items that I didn’t want to feature prominently but that should be just as interesting as the news featured above.

TetaVi raises $4M, partners with 8th Wall to scan the president of Israel, and partners with Crescent to build a volumetric capture stage in Japan.

Occipital, creators of the Structure Sensor, are teasing something new.

Intel released a few demos that show the RealSense LiDAR camera in action. The RealSense docs also have a surprisingly robust breakdown of depth image compression via colorization.

Promising game engine The Machinery (made by ex-Bitsquid/Stingray developers) released a new version of its beta.

Paul Debevec did a big breakdown of the most recent iteration of Light Stage for FXGuide.

Ncam released virtual production software for Unreal.

And with that, we close out the first issue of Rendered! Thanks so much for reading. Or and I both hope it was everything you hoped for, and if not, please send us feedback (and go ahead and send some feedback if you liked it too)! You can reply to this newsletter directly, or reach out to me at kyle.kukshtel@gmail.com.

Additionally, please share this newsletter around! Rendered thrives off of its community of readers, so the larger we can grow that pot of people, the better. Thanks for reading!